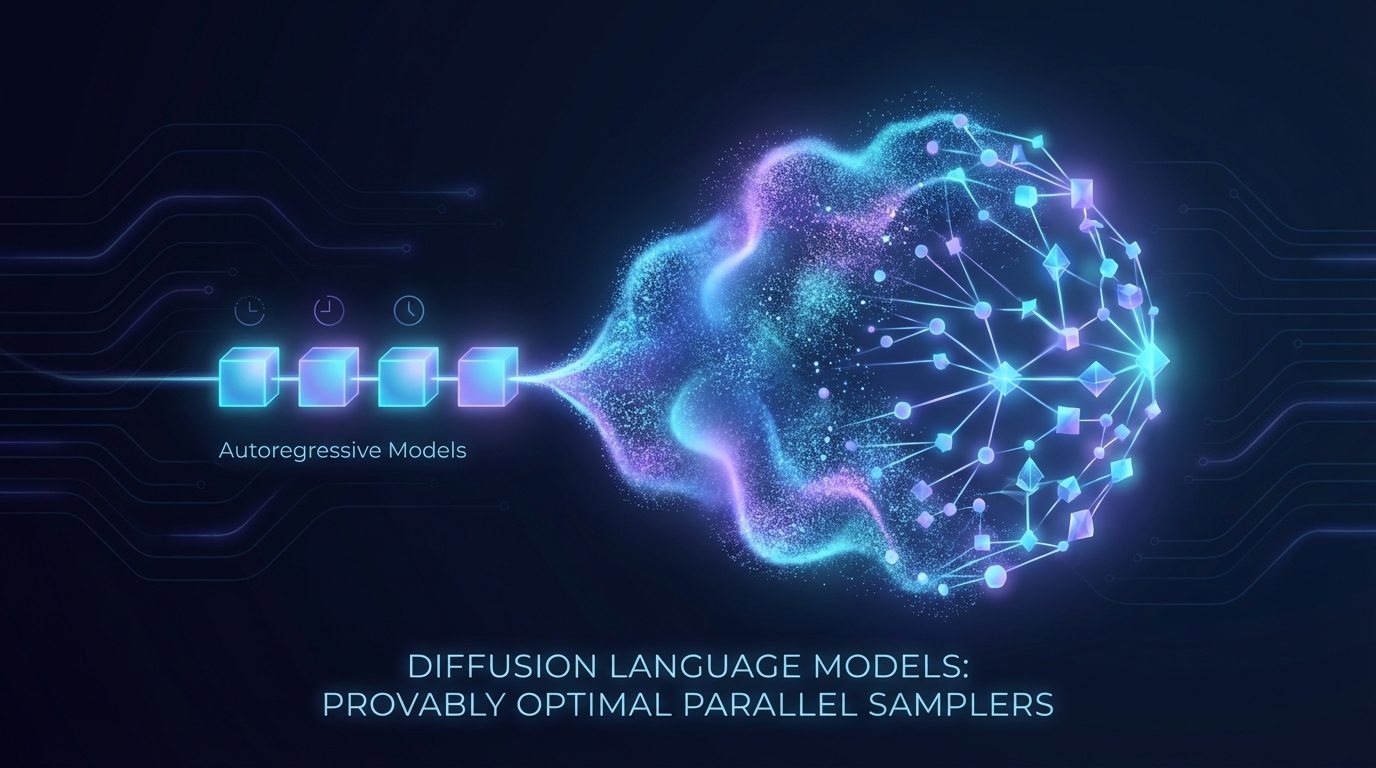

Diffusion Language Models are Provably Optimal Parallel Samplers

Recent research argues that diffusion language models (DLMs) can generate tokens in parallel more efficiently than traditional autoregressive models, which emit one token at a time. By formalizing a model of parallel sampling, the study proves that DLMs augmented with polynomial-length chain-of-thought can match the optimal number of sequential steps achieved by parallel sampling algorithms. However, if already-revealed tokens cannot be modified, DLMs can still incur large intermediate space footprints. Allowing remasking or revision of revealed tokens lets DLMs also achieve optimal space complexity, and it makes them more expressive. The results support the view of DLMs as optimal parallel samplers and argue for building revision capabilities into them.
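To make the vocabulary concrete, here is a minimal toy sketch of the three operations mentioned above: parallel unmasking, remasking, and revision. It is not the construction used in the study. The names `MASK`, `toy_denoiser`, `parallel_decode`, `remask`, and `revise` are all hypothetical, the "model" is a random stand-in, and the reading of remasking as "turn a revealed token back into a mask" and revision as "overwrite a revealed token with another token" is an assumption about how those terms are used.

```python
import random

MASK = None  # hypothetical mask symbol; a real DLM would use a special token id


def toy_denoiser(seq, rng):
    """Hypothetical stand-in for a DLM: propose a token for every masked position."""
    return {i: rng.choice("ab") for i, tok in enumerate(seq) if tok is MASK}


def parallel_decode(length, per_step, seed=0):
    """Reveal up to `per_step` masked positions per sequential step.

    Returns the final sequence and the number of sequential steps used.
    """
    rng = random.Random(seed)
    seq = [MASK] * length
    steps = 0
    while MASK in seq:
        proposals = toy_denoiser(seq, rng)
        # Commit a batch of proposals at once: this is the parallel part.
        for i in sorted(proposals)[:per_step]:
            seq[i] = proposals[i]
        steps += 1
    return seq, steps


def remask(seq, positions):
    """Remasking (assumed meaning): turn revealed tokens back into masks."""
    return [MASK if i in positions else tok for i, tok in enumerate(seq)]


def revise(seq, edits):
    """Revision (assumed meaning): overwrite revealed tokens with other tokens."""
    return [edits.get(i, tok) for i, tok in enumerate(seq)]


if __name__ == "__main__":
    _, sequential = parallel_decode(length=8, per_step=1)
    _, parallel = parallel_decode(length=8, per_step=4)
    print(sequential, parallel)
    print(remask(["a", "b", "a"], {1}))
    print(revise(["a", "b"], {0: "b"}))
```

In this toy, revealing one position per step mimics autoregressive-style decoding, while revealing several per step reduces the number of sequential steps. Without `remask` or `revise`, every revealed position is frozen for the rest of the run, which is the restriction the paragraph above ties to large intermediate footprints.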