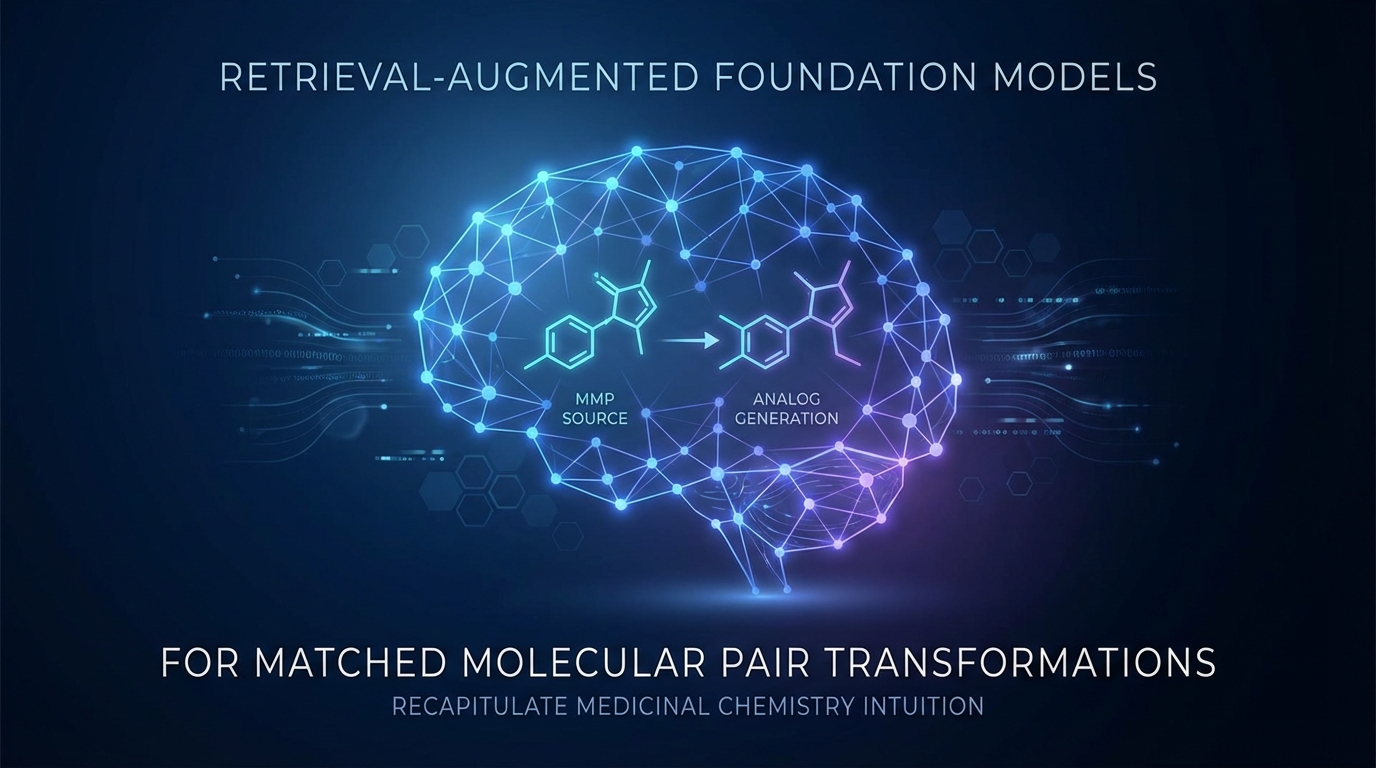

Retrieval-Augmented Foundation Models for Matched Molecular Pair Transformations to Recapitulate Medicinal Chemistry Intuition

Researchers have developed a foundation model that generates chemical analogs through matched molecular pair (MMP) transformations. The model generates diverse variables (the parts of a molecule that change within an MMP) that follow user-defined transformation patterns, which improves controllability. The method, named MMPT-RAG, is retrieval-augmented: it incorporates external references to improve the contextual relevance of its suggestions. Experiments indicate improved diversity and novelty of the generated compounds, suggesting the approach is a useful tool for medicinal chemistry in practical drug discovery.
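
To make the retrieval-augmentation idea concrete, here is a minimal toy sketch. It is not the MMPT-RAG implementation. It assumes transformations are stored as pairs of SMILES-like fragment strings, and it uses character-bigram Jaccard similarity purely as a stand-in for whatever retrieval the actual method uses. The reference entries and the names `REFERENCE_TRANSFORMS`, `retrieve`, and `build_prompt` are hypothetical and chosen only for illustration.

```python
from typing import List, Set, Tuple

# Hypothetical reference set of MMP transformations (variable -> replacement).
# These entries are illustrative and are not taken from the paper.
REFERENCE_TRANSFORMS: List[Tuple[str, str]] = [
    ("[*]C", "[*]CC"),
    ("[*]Cl", "[*]F"),
    ("[*]OC", "[*]OCC"),
    ("[*]c1ccccc1", "[*]c1ccncc1"),
]


def bigrams(s: str) -> Set[str]:
    """Return the set of adjacent character pairs in a string."""
    return {s[i:i + 2] for i in range(len(s) - 1)}


def similarity(a: str, b: str) -> float:
    """Jaccard similarity over character bigrams (an assumed stand-in metric)."""
    ga, gb = bigrams(a), bigrams(b)
    union = ga | gb
    return len(ga & gb) / len(union) if union else 0.0


def retrieve(query: str, k: int = 2) -> List[Tuple[str, str]]:
    """Return the k reference transformations whose source is closest to the query."""
    ranked = sorted(
        REFERENCE_TRANSFORMS,
        key=lambda pair: similarity(query, pair[0]),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, k: int = 2) -> str:
    """Prepend retrieved transformations as context for a (hypothetical) generator."""
    context = " ; ".join(f"{src}>>{dst}" for src, dst in retrieve(query, k))
    return f"references: {context} | query: {query}>>"


print(build_prompt("[*]Cl"))
```

In this sketch the retrieved pairs are simply concatenated in front of the query, so a downstream generator would see related transformations as context before it proposes a replacement for the query variable. How the actual model encodes and consumes its references is not described in this summary.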