Overcoming Compute and Memory Bottlenecks with FlashAttention-4 on NVIDIA Blackwell


The transformer architecture is central to the rise of generative AI and underpins large language models (LLMs) such as GPT, DeepSeek, and Llama. Its attention mechanism lets a model weigh every token in a sequence against every other token, which improves contextual understanding and has driven major advances in natural language processing. As a result, transformers now power more nuanced and responsive applications across many sectors, from customer service to content creation.


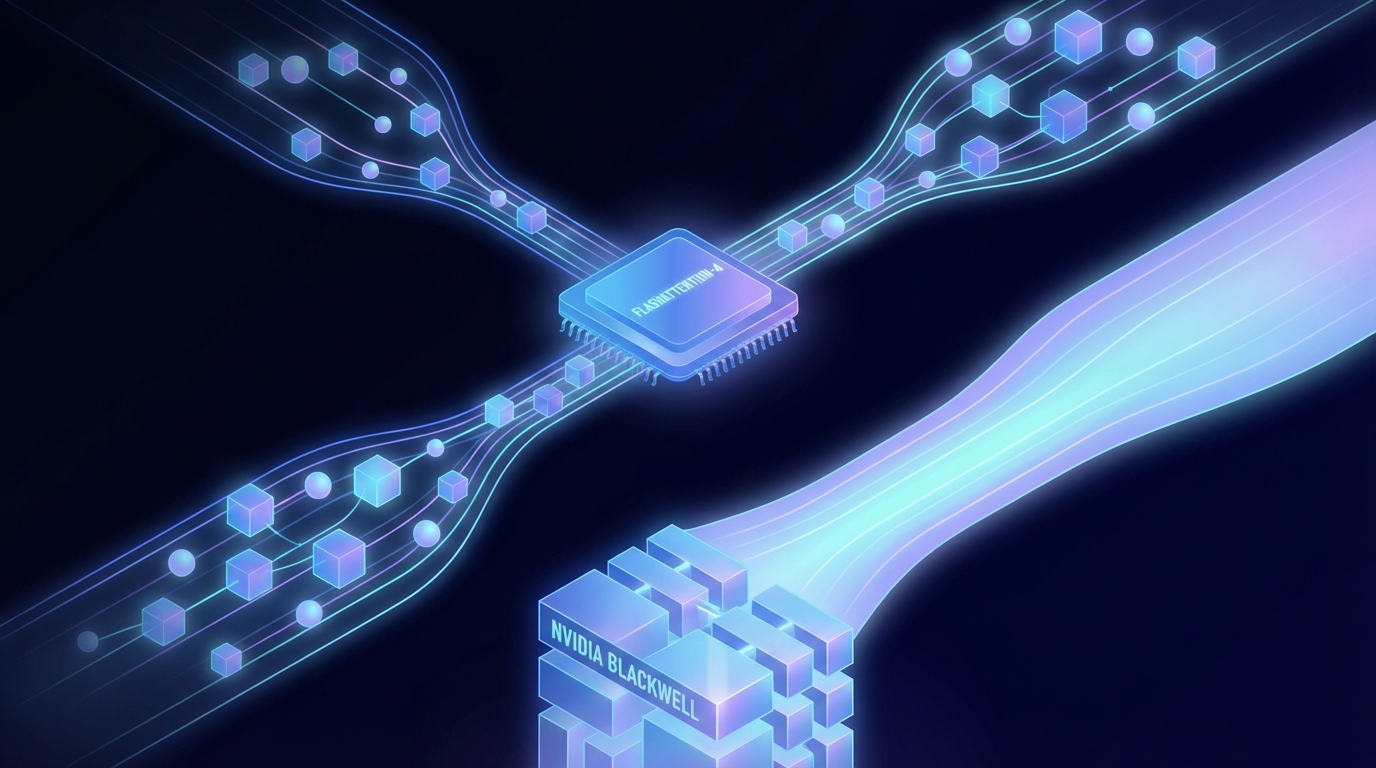

NVIDIA has unveiled FlashAttention-4, an attention implementation that targets compute and memory efficiency in transformer models, particularly LLMs.

FlashAttention-4 addresses the limits of traditional attention mechanisms, whose memory use and compute cost climb steeply as sequence lengths and model sizes grow. By easing those limits, it allows larger models to be trained and deployed more efficiently.
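To make the memory pressure concrete, here is a back-of-the-envelope sketch. It assumes a standard attention implementation that materializes the full score matrix, which has one entry per query-key pair and therefore grows with the square of the sequence length. The function name and the example sizes are illustrative assumptions, not figures from the original post.

```python
def attention_score_bytes(seq_len, num_heads, bytes_per_element=2, batch_size=1):
    """Bytes needed to hold the full seq_len x seq_len score matrix.

    Assumes one score matrix per head and per batch element, stored at
    bytes_per_element (2 for FP16/BF16). Illustrative only.
    """
    return batch_size * num_heads * seq_len * seq_len * bytes_per_element


# Hypothetical configuration: 32 heads, 16-bit scores.
for seq_len in (2048, 8192, 32768):
    gib = attention_score_bytes(seq_len, num_heads=32) / 1024**3
    print(f"seq_len={seq_len:>6}: {gib:8.2f} GiB for the score matrix alone")
```

Quadrupling the sequence length multiplies this figure by sixteen, which is why implementations in the FlashAttention family avoid storing the full matrix.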

Key features of FlashAttention-4 include:

- Improved Memory Efficiency: Reduces the memory footprint for attention computations, enabling larger models to fit into existing hardware.

- Enhanced Speed: Significantly accelerates training and inference processes for transformer models.

- Seamless Integration with Blackwell: Designed to take full advantage of the hardware enhancements in NVIDIA Blackwell GPUs.
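The memory savings listed above can be illustrated with the tiling idea generally associated with the FlashAttention family: process keys and values in blocks, and keep only a running maximum, a running normalizer, and a running output per query, so the full score matrix is never stored. The following is a minimal pure-Python sketch under that assumption. It is not the FlashAttention-4 kernel and says nothing about its Blackwell-specific optimizations; the function names and block size are hypothetical.

```python
import math


def naive_attention(q, k, v):
    """Reference softmax attention: builds the full score row for each query."""
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for qi in q:
        scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) / total
                    for c in range(len(v[0]))])
    return out


def tiled_attention(q, k, v, block_size=2):
    """Same result, but keys/values are consumed one block at a time.

    Per query, only a running max, a running sum, and a running output
    accumulator are kept (the "online softmax" trick).
    """
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for qi in q:
        running_max = float("-inf")
        running_sum = 0.0
        acc = [0.0] * len(v[0])
        for start in range(0, len(k), block_size):
            k_blk = k[start:start + block_size]
            v_blk = v[start:start + block_size]
            scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k_blk]
            new_max = max(running_max, max(scores))
            # Rescale earlier partial results to the new maximum.
            correction = math.exp(running_max - new_max)
            weights = [math.exp(s - new_max) for s in scores]
            running_sum = running_sum * correction + sum(weights)
            acc = [a * correction + sum(w * vj[c] for w, vj in zip(weights, v_blk))
                   for c, a in enumerate(acc)]
            running_max = new_max
        out.append([a / running_sum for a in acc])
    return out


# Tiny hypothetical inputs: 2 queries, 5 keys/values, head dimension 2.
q = [[0.1, 0.2], [0.3, -0.4]]
k = [[0.5, 0.1], [-0.2, 0.7], [0.9, -0.3], [0.0, 0.4], [0.6, 0.6]]
v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [2.0, -1.0], [-1.0, 3.0]]
print(tiled_attention(q, k, v, block_size=2))
```

Both functions compute the same mathematical result up to floating-point rounding. The tiled version trades a few extra rescaling multiplications for working memory that depends on the block size rather than on the full sequence length.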

Early benchmarks indicate that models using FlashAttention-4 achieve higher throughput, reducing the time and resources needed for training. As demand for more capable AI models rises, this efficiency gain could make further improvements in natural language understanding and generation more practical to pursue.


📰 Original Source: https://developer.nvidia.com/blog/overcoming-compute-and-memory-bottlenecks-with-flashattention-4-on-nvidia-blackwell/

All rights and credit belong to the original publisher.