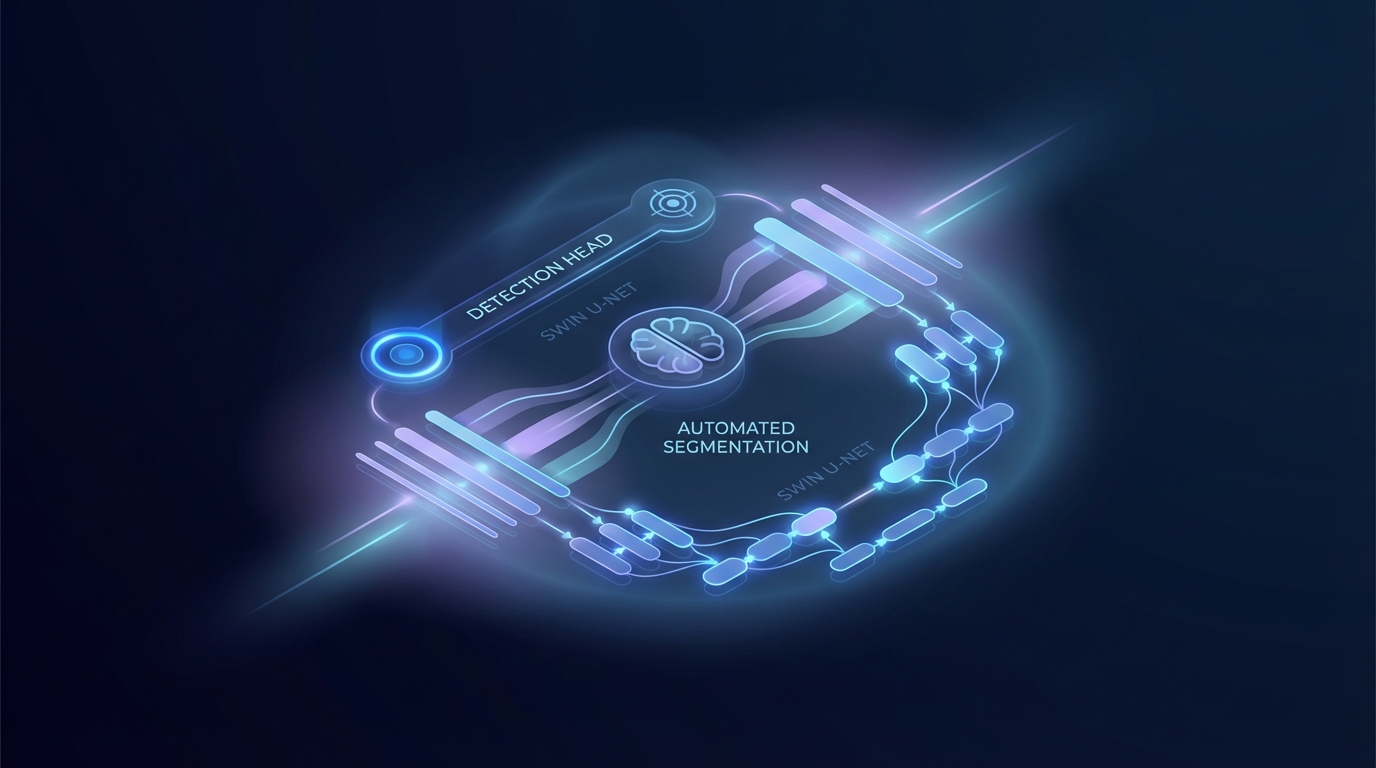

Multi-head automated segmentation by incorporating detection head into the contextual layer neural network

Image generated by Gemini AI

A new gated multi-head Transformer architecture, based on Swin U-Net, improves auto-segmentation in radiotherapy by integrating inter-slice context and a parallel detection head. The model suppresses false positives in slices without target structures, achieving a mean Dice loss of $0.013 \pm 0.036$ compared to $0.732 \pm 0.314$ for a non-gated segmentation-only baseline. The authors present detection-based gating as a way to make automated contouring more reliable in clinical settings.

New Multi-Head Transformer Model Enhances Automated Segmentation in Radiotherapy

A novel gated multi-head Transformer architecture, based on Swin U-Net, targets a specific failure mode of automated segmentation in radiotherapy: false-positive contours in slices that contain no target structure.

The proposed architecture integrates inter-slice context and adds a parallel detection head. The detection head predicts, slice by slice, whether a structure is present, while a context-enhanced stream performs pixel-level segmentation. The detection outputs then gate the segmentation predictions, suppressing false positives in anatomically invalid slices.
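The gating step can be illustrated with a minimal NumPy sketch. It assumes hard gating, with a threshold applied to the per-slice detection probability; the summary does not say whether the paper uses hard or soft gating. The function name `gate_segmentation`, the array shapes, and both thresholds are hypothetical and chosen only for illustration.

```python
import numpy as np

def gate_segmentation(seg_probs, det_probs, det_threshold=0.5, seg_threshold=0.5):
    """Suppress segmentation output in slices where no structure is detected.

    seg_probs: array of shape (slices, H, W) with per-pixel probabilities.
    det_probs: array of shape (slices,) with per-slice presence probabilities.
    Both thresholds are illustrative assumptions, not values from the paper.
    """
    seg_probs = np.asarray(seg_probs, dtype=float)
    det_probs = np.asarray(det_probs, dtype=float)

    # Slice-level decision: is the structure present in this slice?
    present = det_probs >= det_threshold            # shape (slices,)

    # Pixel-level decision from the segmentation stream.
    mask = seg_probs >= seg_threshold               # shape (slices, H, W)

    # Broadcast the slice decision over H and W; a slice flagged as empty
    # yields an all-False mask regardless of the pixel probabilities.
    return mask & present[:, None, None]


# Two slices with confident pixel predictions; only the first is "detected".
seg = np.full((2, 4, 4), 0.9)
det = np.array([0.95, 0.05])
gated = gate_segmentation(seg, det)
print(gated[0].sum(), gated[1].sum())
```

A soft variant would multiply `seg_probs` by `det_probs[:, None, None]` before thresholding; which form the authors use is not stated here.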

Experimental Results

Experiments on the Prostate-Anatomical-Edge-Cases dataset showed that the gated model substantially outperforms a non-gated segmentation-only baseline. The gated model achieved a mean Dice loss (lower is better) of $0.013 \pm 0.036$, compared to $0.732 \pm 0.314$ for the non-gated model. Furthermore, the gated model's detection probabilities correlated strongly with actual anatomical presence, effectively eliminating spurious segmentations.

In contrast, the non-gated model displayed greater variability and persistent false positives, indicating a lack of robustness in its predictions. These findings highlight the advantages of detection-based gating in automated segmentation, enhancing both robustness and anatomical plausibility.
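To see why false positives on empty slices weigh so heavily on the Dice loss, consider the sketch below. It uses a common smoothed form of the Dice loss. The smoothing constant `eps` and the convention that an empty prediction on an empty target scores zero loss are assumptions; the summary does not specify how the paper handles empty slices.

```python
import numpy as np

def dice_loss(pred, target, eps=1e-6):
    """Smoothed Dice loss: 1 - (2|P∩T| + eps) / (|P| + |T| + eps).

    eps is an assumed smoothing constant that keeps the ratio defined
    when both the prediction and the target are empty.
    """
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    intersection = (pred * target).sum()
    return 1.0 - (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)


empty_target = np.zeros((8, 8))      # slice with no target structure

false_positive = np.zeros((8, 8))
false_positive[2:4, 2:4] = 1.0       # spurious 4-pixel prediction

gated_output = np.zeros((8, 8))      # prediction suppressed by the gate

print(dice_loss(false_positive, empty_target))  # close to 1
print(dice_loss(gated_output, empty_target))    # close to 0
```

Under this convention, any nonzero prediction on an empty slice costs nearly the maximum loss, so a model that leaks even small false positives across many empty slices accumulates a high mean Dice loss, while a gated model that outputs nothing on those slices does not.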

📰 Original Source: https://arxiv.org/abs/2602.02471v1

All rights and credit belong to the original publisher.