Exploring Transformer Placement in Variational Autoencoders for Tabular Data Generation

Image generated by Gemini AI

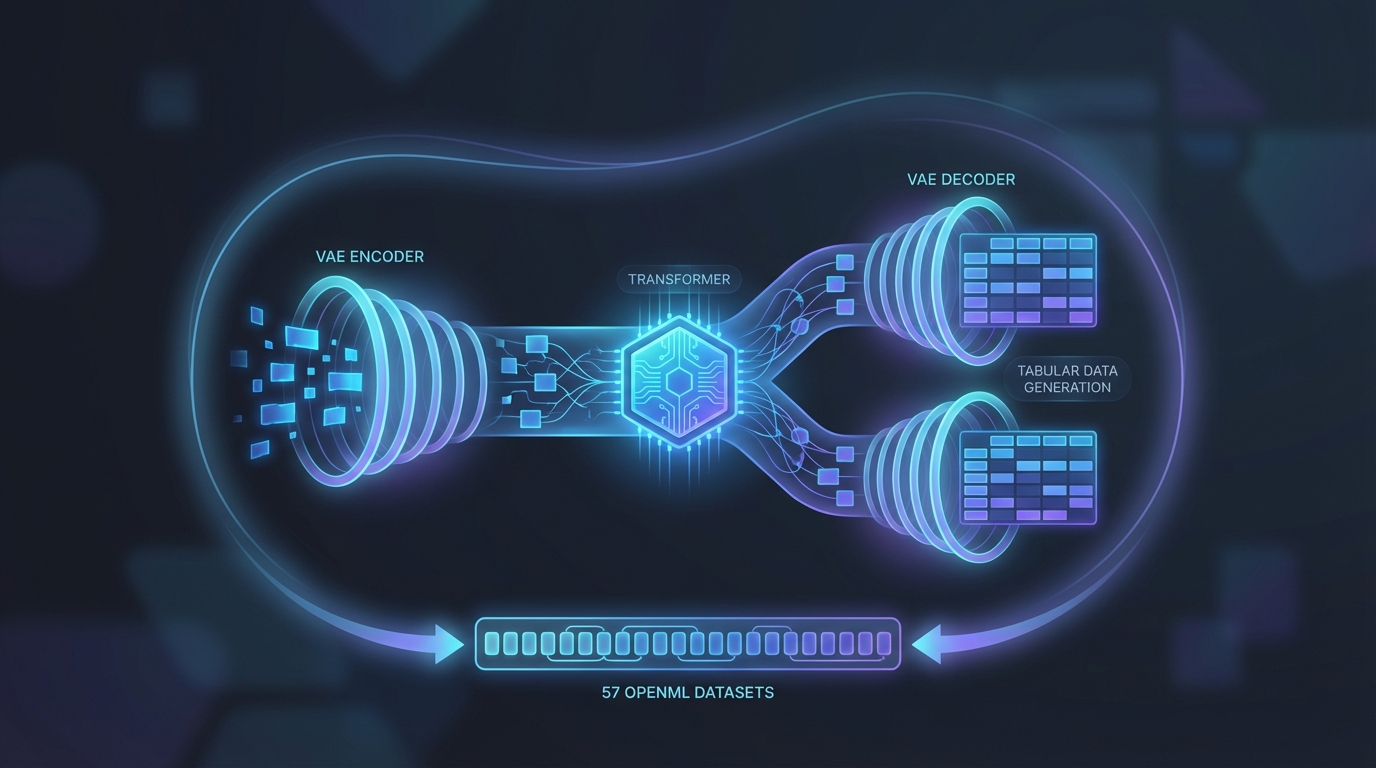

A study investigates how integrating Transformers into different parts of a Variational Autoencoder (VAE) affects tabular data generation. In experiments on 57 datasets from the OpenML CC18 suite, the researchers found that placing Transformers in the latent and decoder components creates a trade-off between fidelity and diversity. They also observed high similarity between consecutive Transformer blocks, and found that in the decoder the relationship between a Transformer's input and output is nearly linear.

Transformers Enhance Variational Autoencoders for Tabular Data Generation

Recent research examines how the placement of Transformer blocks inside a Variational Autoencoder (VAE) affects the tabular data it generates. The study evaluates the resulting architectures on 57 datasets from the OpenML CC18 suite.

Key Findings from the Study

The study highlights two primary conclusions:

- Transformer Placement: Placing Transformers so that they operate on the latent and decoder representations creates a trade-off between fidelity and diversity in the generated data.

- Block Similarity: Consecutive Transformer blocks produce highly similar representations. This is most pronounced in the decoder, where the relationship between a Transformer's input and output is approximately linear.

These findings suggest that Transformers can be useful in VAEs for tabular data, but their placement must be chosen carefully to balance quality (fidelity) and variability (diversity) in the generated data.
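The block-similarity finding can be made concrete with a small sketch. The article does not say which metrics the study used, so the two measures here are assumptions chosen for illustration: mean cosine similarity between the representations of consecutive blocks, and the R² of an affine least-squares fit from a block's input to its output. The function names and the synthetic arrays are hypothetical stand-ins for real hidden states.

```python
import numpy as np


def mean_cosine_similarity(a, b):
    """Mean cosine similarity between matching rows of two (n, d) arrays."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    num = np.sum(a * b, axis=1)
    den = np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1)
    return float(np.mean(num / np.maximum(den, 1e-12)))


def linear_fit_r2(x, y):
    """R^2 of the best affine map from x (n, d_in) to y (n, d_out)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    design = np.hstack([x, np.ones((x.shape[0], 1))])  # append a bias column
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residual = y - design @ coef
    ss_res = float(np.sum(residual ** 2))
    ss_tot = float(np.sum((y - y.mean(axis=0)) ** 2))
    return 1.0 - ss_res / ss_tot


# Synthetic stand-ins for a block's input and output (assumption: in practice
# these would be hidden states captured from a trained model).
rng = np.random.default_rng(0)
block_in = rng.normal(size=(200, 8))
weight = rng.normal(size=(8, 8))
near_linear_out = block_in @ weight + 0.01 * rng.normal(size=(200, 8))
nonlinear_out = np.sin(3.0 * block_in @ weight)

r2_linear = linear_fit_r2(block_in, near_linear_out)
r2_nonlinear = linear_fit_r2(block_in, nonlinear_out)
sim_identity = mean_cosine_similarity(block_in, block_in)

print(f"R^2, near-linear block: {r2_linear:.4f}")
print(f"R^2, nonlinear block:   {r2_nonlinear:.4f}")
print(f"cosine similarity of a representation with itself: {sim_identity:.4f}")
```

An R² close to 1 means an affine map explains almost all of the block's output, which is one way to read "approximately linear". A cosine similarity close to 1 between the outputs of consecutive blocks would indicate that a block changes the representation very little.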

📰 Original Source: https://arxiv.org/abs/2601.20854v1

All rights and credit belong to the original publisher.